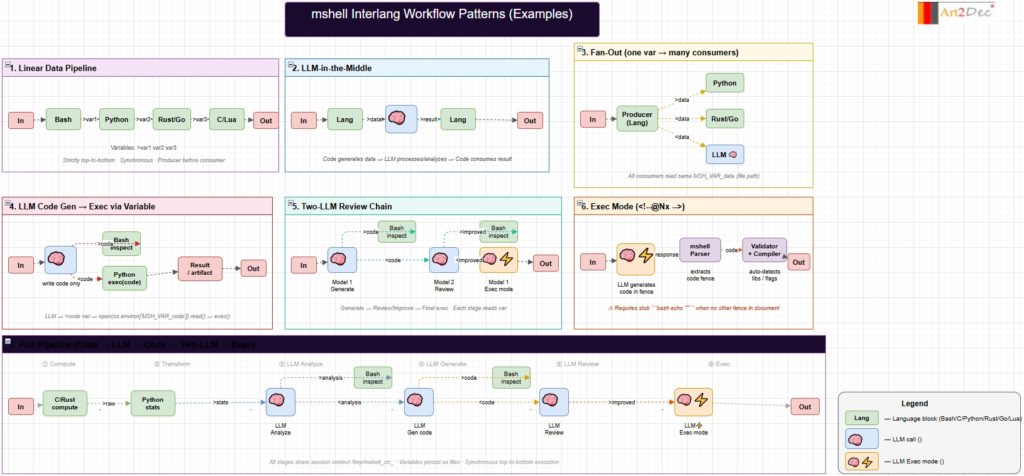

This diagram documents the core workflow patterns available in the mshell Ecosystem, an AI-powered polyglot shell that executes Markdown documents containing mixed-language code blocks and inline LLM directives. The diagram covers seven patterns, arranged from simple to complex.

The first three patterns (Linear Data Pipeline, LLM-in-the-Middle, Fan-Out) represent foundational data flow. In the Linear Data Pipeline, code blocks in different languages pass data to each other through named session variables (`>var` / `<var`). LLM-in-the-Middle adds an LLM processing step between two code blocks, and Fan-Out has a single producer whose output is read by multiple consumers.
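To make the session-variable data flow concrete, here is a minimal Python model of the first three patterns. It assumes that `>var` writes a session variable and `<var` reads one, models each code block as plain Python, and replaces the LLM step with a hypothetical stub (`fake_llm`). The `Session` class is illustrative, not mshell's implementation.

```python
# Minimal model of mshell-style session variables (an assumption, not mshell's code):
# a block "writes" with >var and "reads" with <var via a shared mapping.


class Session:
    """Illustrative stand-in for the mshell session context."""

    def __init__(self):
        self._vars = {}

    def write(self, name, value):  # models >name
        self._vars[name] = value

    def read(self, name):  # models <name; reading an unset name is an error
        if name not in self._vars:
            raise KeyError(f"variable {name!r} was never written")
        return self._vars[name]


def fake_llm(prompt):
    """Hypothetical stand-in for an LLM directive; returns a canned summary."""
    return f"summary of {len(prompt.split())} words"


session = Session()

# Linear Data Pipeline: a producer block (e.g. C) writes >raw,
# and a consumer block (e.g. Python) reads <raw.
session.write("raw", "3 1 4 1 5 9 2 6")
numbers = [int(tok) for tok in session.read("raw").split()]
session.write("total", sum(numbers))

# LLM-in-the-Middle: an LLM step reads <raw and writes >summary for a later block.
session.write("summary", fake_llm(session.read("raw")))

# Fan-Out: one producer variable (<raw) is consumed by several downstream blocks.
maximum = max(int(tok) for tok in session.read("raw").split())
count = len(session.read("raw").split())

print(session.read("total"), session.read("summary"), maximum, count)
```

Because blocks run top to bottom, the Fan-Out consumers here read `raw` one after another rather than in parallel, and reading a name that no earlier block wrote raises an error.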

The next two patterns (LLM Code Gen → Exec via Variable, Two-LLM Review Chain) show more advanced LLM integration. In the first, a model generates executable code that is stored in a variable and then run via `exec()`. In the second, Model 1 generates code, Model 2 reviews and improves it, and Model 1 finalizes it in exec mode, mirroring a human code review cycle.
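Both LLM-integration patterns can be sketched in Python with deterministic stand-ins for the models. `model_1` and `model_2` are hypothetical stubs rather than real LLM calls, a plain dict stands in for the session, and Python's built-in `exec()` plays the role of mshell's `exec()`, whose exact semantics this sketch does not claim to reproduce.

```python
# Sketch of "LLM Code Gen -> Exec via Variable" and a "Two-LLM Review Chain".
# model_1 / model_2 are hypothetical deterministic stubs, not real LLM calls,
# and Python's built-in exec() stands in for mshell's exec().

session = {}  # stand-in for the shared session context


def model_1(prompt):
    """Hypothetical generator model: returns deliberately naive code."""
    if prompt.startswith("FINALIZE:"):
        # Finalization pass: accept the reviewed code unchanged.
        return prompt[len("FINALIZE:"):]
    return "def mean(xs):\n    return sum(xs) / len(xs)\n"


def model_2(code):
    """Hypothetical reviewer model: adds a guard for empty input."""
    return code.replace(
        "    return sum(xs) / len(xs)\n",
        "    if not xs:\n        return 0.0\n    return sum(xs) / len(xs)\n",
    )


# Code Gen -> Exec via Variable: generated code is stored in a variable.
session["gen_code"] = model_1("write a mean() function")

# Two-LLM Review Chain: Model 1 generates, Model 2 improves, Model 1 finalizes.
session["reviewed"] = model_2(session["gen_code"])
session["final"] = model_1("FINALIZE:" + session["reviewed"])

# Execute the finalized code straight from the variable.
namespace = {}
exec(session["final"], namespace)
mean = namespace["mean"]
print(mean([2, 4, 6]), mean([]))
```

The review step is what turns the naive generated function, which would fail on an empty list, into one that handles it.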

Exec Mode (`<!--@Nx-->`) is a unique mshell primitive: the mshell Parser intercepts the LLM response and extracts its code fence, and the Validator automatically resolves compiler flags and library dependencies before the code is compiled and the resulting binary is run.
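The Exec Mode post-processing might look roughly like the following Python sketch: extract the first fenced block from the response, infer extra linker flags from `#include` lines, and assemble a compile command. The header-to-flag table (`HEADER_FLAGS`), the choice of `gcc`, and the file names are assumptions for illustration; the sketch only builds the command and does not invoke a compiler.

```python
import re

# Sketch of Exec Mode post-processing under stated assumptions:
# 1) extract the first fenced code block from an LLM response,
# 2) infer extra flags from #include lines (hypothetical table),
# 3) build a compile command. Nothing is compiled or run here.

FENCE = "`" * 3  # built indirectly so this example nests cleanly in Markdown
FENCE_RE = re.compile(FENCE + r"(\w*)\n(.*?)" + FENCE, re.DOTALL)

# Hypothetical header -> flag table; mshell's real Validator rules are not shown here.
HEADER_FLAGS = {
    "math.h": ["-lm"],
    "pthread.h": ["-pthread"],
}


def extract_fence(response):
    """Return (language, code) for the first fenced block, or None."""
    match = FENCE_RE.search(response)
    if match is None:
        return None
    return match.group(1) or "text", match.group(2)


def resolve_flags(code):
    """Collect flags for every #include <header> listed in HEADER_FLAGS."""
    flags = []
    for header in re.findall(r"#include\s*<([^>]+)>", code):
        for flag in HEADER_FLAGS.get(header, []):
            if flag not in flags:
                flags.append(flag)
    return flags


def build_command(code, src="main.c", out="main"):
    """Assemble a gcc command line (assumed compiler); libraries go after sources."""
    return ["gcc", src, "-o", out] + resolve_flags(code)


response = (
    "Here is the program:\n"
    + FENCE + "c\n"
    + "#include <stdio.h>\n#include <math.h>\n"
    + "int main(void) { printf(\"%f\\n\", sqrt(2.0)); return 0; }\n"
    + FENCE + "\nLet me know if you need changes."
)

lang, code = extract_fence(response)
print(lang, build_command(code))
```

A header lookup table is the simplest resolution strategy one could use; nothing in this document says which approach mshell's Validator actually takes.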

The bottom-spanning Full Pipeline combines all stages into a single chain: C/Rust computes raw data → Python transforms it into statistics → LLM analyzes → LLM generates visualization code → LLM reviews → Exec mode compiles and runs. The whole chain is driven from one `.md` file without any manual editing.
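The Full Pipeline can be modeled as an ordered list of stages over one shared session, each declaring the variables it reads and writes. In this Python sketch the `compute` stage stands in for the C/Rust block, the three LLM stages are hypothetical stubs, and the final stage uses Python's `exec()` with captured stdout in place of Exec mode. Stage and variable names are illustrative only.

```python
import contextlib
import io
import statistics

# Sketch of the Full Pipeline as an ordered list of stages sharing one session.
# Each stage declares the variables it reads (<var) and the one it writes (>var).
# The LLM stages are hypothetical stubs; names are illustrative only.


def run_pipeline(stages):
    """Run stages strictly top to bottom, failing if a stage reads a variable
    that no earlier stage has written."""
    session = {}
    for name, reads, writes, fn in stages:
        missing = [v for v in reads if v not in session]
        if missing:
            raise RuntimeError(f"stage {name!r} reads unset variables {missing}")
        session[writes] = fn(*(session[v] for v in reads))
    return session


def run_code(code):
    """Stand-in for Exec mode: run the code and capture what it prints."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(code, {})
    return buffer.getvalue()


stages = [
    # C/Rust compute step, modeled here as producing raw samples.
    ("compute", [], "raw", lambda: [float(i * i % 7) for i in range(10)]),
    # Python transform step: raw data -> summary statistics.
    ("stats", ["raw"], "stats",
     lambda raw: {"mean": statistics.mean(raw), "max": max(raw)}),
    # LLM analysis (stub).
    ("analyze", ["stats"], "analysis",
     lambda s: f"mean={s['mean']:.2f}, peak={s['max']}"),
    # LLM visualization code generation (stub): emits a one-line program.
    ("gen_viz", ["analysis"], "viz_code", lambda a: f"print({a!r})"),
    # LLM review (stub): accepts the code unchanged.
    ("review", ["viz_code"], "final_code", lambda c: c),
    # Exec mode stand-in: run the reviewed code.
    ("exec", ["final_code"], "output", run_code),
]

session = run_pipeline(stages)
print(session["output"], end="")
```

Checking declared inputs before each stage is one way to enforce strict top-to-bottom ordering: a stage that reads a variable no earlier stage has written fails immediately instead of running on missing data.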

All patterns execute strictly top-to-bottom and share a session context at `/tmp/mshell_ctx_<pid>`, where variables persist as plain files for the duration of the process. The diagram shows example patterns for synchronous usage only, without extensions.
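A file-backed session context of this kind can be sketched in a few lines of Python. The directory name follows the `mshell_ctx_<pid>` pattern, resolved via `tempfile.gettempdir()` (which is `/tmp` on typical Linux systems). Storing one file per variable, named after the variable, and deleting the directory at process exit are assumptions for illustration, not documented mshell behavior.

```python
import atexit
import os
import shutil
import tempfile

# Sketch of a file-backed session context: each variable is one plain file in a
# per-process directory named like mshell_ctx_<pid>. The one-file-per-variable
# layout and the cleanup at exit are assumptions, not documented mshell behavior.

CTX_DIR = os.path.join(tempfile.gettempdir(), f"mshell_ctx_{os.getpid()}")

# Assumption: the context only needs to live as long as the process.
atexit.register(shutil.rmtree, CTX_DIR, ignore_errors=True)


def write_var(name, value):
    """Model >name: persist the value as a plain text file."""
    os.makedirs(CTX_DIR, exist_ok=True)
    with open(os.path.join(CTX_DIR, name), "w", encoding="utf-8") as fh:
        fh.write(value)


def read_var(name):
    """Model <name: read the value back from its file."""
    with open(os.path.join(CTX_DIR, name), encoding="utf-8") as fh:
        return fh.read()


write_var("raw", "3 1 4 1 5")
write_var("summary", "five small integers")
print(sorted(os.listdir(CTX_DIR)), read_var("raw"))
```

Plain files make the context language-neutral: any block, whether C, Rust, or Python, can read or write a variable with ordinary file I/O.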