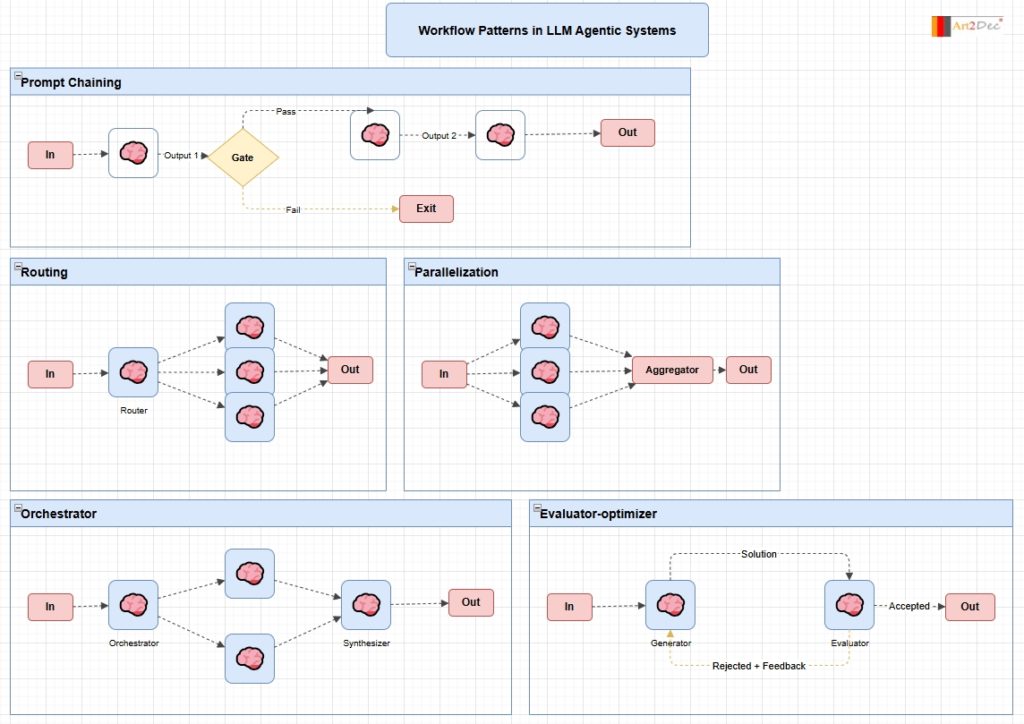

This diagram illustrates five canonical architectural patterns for building multi-agent systems with Large Language Models, following the taxonomy popularized in agentic AI research.

Prompt Chaining is the simplest pattern: a sequence of LLM calls where each output feeds the next input. A Gate node introduces conditional logic: on Pass the chain continues through additional LLM stages, and on Fail the flow exits early. Most multi-step reasoning pipelines build on this pattern.
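As a rough illustration, the chain-plus-gate flow could be sketched as below. The `LLM` type, `run_chain`, and the stub stages are hypothetical names introduced here; each "LLM" is a plain function standing in for a real model call.

```python
from typing import Callable, Optional, Sequence

# Assumption: an "LLM" is any callable that maps a prompt string to a completion.
LLM = Callable[[str], str]


def run_chain(
    text: str,
    first: LLM,
    gate: Callable[[str], bool],
    rest: Sequence[LLM],
) -> Optional[str]:
    """Run the first stage, check the gate, then run the remaining stages."""
    out = first(text)
    if not gate(out):
        return None  # Fail: exit early
    for stage in rest:
        out = stage(out)  # Pass: each output feeds the next input
    return out


# Stub stages so the sketch runs without a model (illustrative only).
def outline(s: str) -> str:
    return f"outline({s})"


def draft(s: str) -> str:
    return f"draft({s})"


def polish(s: str) -> str:
    return f"polish({s})"


def has_content(s: str) -> bool:
    # Hypothetical gate: reject an outline that wraps an empty input.
    return len(s) > len("outline()")


result = run_chain("topic", outline, has_content, [draft, polish])
print(result)
```

Returning `None` on Fail is one possible convention; a real pipeline might instead raise an error or return a structured rejection.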

Routing uses a dedicated Router LLM to classify the input and dispatch it to one of several specialized downstream agents. Only one branch executes, making this suitable for scenarios where different input types require fundamentally different handling.
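A minimal sketch of routing, under the assumption that the router returns a short label naming the target branch. The names `route`, `stub_router`, and the handler labels are hypothetical, and the router here is a keyword check standing in for a classifying LLM call.

```python
from typing import Callable, Dict

# Assumption: an "LLM" is any callable that maps a prompt string to a completion.
LLM = Callable[[str], str]


def route(text: str, router: LLM, handlers: Dict[str, LLM], default: str) -> str:
    """Ask the router for a label, then run exactly one matching handler."""
    label = router(text).strip().lower()
    # Fall back to the default branch if the router emits an unknown label.
    handler = handlers.get(label, handlers[default])
    return handler(text)


# Stub router and specialized agents (illustrative only).
def stub_router(text: str) -> str:
    return "billing" if "invoice" in text.lower() else "general"


def billing_agent(text: str) -> str:
    return f"[billing] {text}"


def general_agent(text: str) -> str:
    return f"[general] {text}"


handlers = {"billing": billing_agent, "general": general_agent}

print(route("Where is my invoice?", stub_router, handlers, default="general"))
print(route("What are your opening hours?", stub_router, handlers, default="general"))
```

The default branch is a design choice added for this sketch: it keeps the flow from failing when the router produces a label no handler is registered for.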

Parallelization fans the input out to multiple LLM agents running independently, then collects their outputs through an Aggregator. This is used to cut end-to-end latency by running calls concurrently, generate diverse perspectives, or split a large task into independent subtasks.
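The fan-out and aggregation step might look like the following sketch, which uses threads from the standard library on the assumption that each LLM call is I/O-bound. `parallelize` and the stub workers are hypothetical names introduced for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence

# Assumption: an "LLM" is any callable that maps a prompt string to a completion.
LLM = Callable[[str], str]


def parallelize(
    text: str,
    workers: Sequence[LLM],
    aggregate: Callable[[List[str]], str],
) -> str:
    """Send the same input to every worker concurrently, then aggregate."""
    with ThreadPoolExecutor(max_workers=max(1, len(workers))) as pool:
        futures = [pool.submit(worker, text) for worker in workers]
        # Collect in submission order so the aggregator sees a stable ordering.
        outputs = [future.result() for future in futures]
    return aggregate(outputs)


# Stub workers and aggregator (illustrative only).
def shout(text: str) -> str:
    return text.upper()


def mirror(text: str) -> str:
    return text[::-1]


def measure(text: str) -> str:
    return str(len(text))


def join_outputs(outputs: List[str]) -> str:
    return " | ".join(outputs)


combined = parallelize("abc", [shout, mirror, measure], join_outputs)
print(combined)
```

In this sketch every worker receives the same input; splitting a large task into distinct subtasks would pass a different slice to each worker instead.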

Orchestrator extends parallelization with explicit coordination: a central Orchestrator LLM plans and delegates subtasks to worker agents, then a Synthesizer LLM merges the results into a coherent final output. This pattern is common in complex research or code generation pipelines.
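One way to sketch the plan, delegate, and synthesize steps is shown below. The function names and the stub planner are hypothetical; the planner splits the task on the word "and" purely as a stand-in for an LLM that decides the subtasks. Workers run sequentially here to keep the example short, although they could be dispatched concurrently.

```python
from typing import Callable, List

# Assumption: an "LLM" is any callable that maps a prompt string to a completion.
LLM = Callable[[str], str]


def orchestrate(
    task: str,
    plan: Callable[[str], List[str]],
    worker: LLM,
    synthesize: Callable[[str, List[str]], str],
) -> str:
    """Plan subtasks, delegate each to a worker, then merge the results."""
    subtasks = plan(task)  # the orchestrator decides the subtasks at run time
    results = [worker(subtask) for subtask in subtasks]
    return synthesize(task, results)


# Stub orchestrator, worker, and synthesizer (illustrative only).
def stub_plan(task: str) -> List[str]:
    return [part.strip() for part in task.split(" and ")]


def stub_worker(subtask: str) -> str:
    return f"done: {subtask}"


def stub_synthesize(task: str, results: List[str]) -> str:
    return f"{task} => " + "; ".join(results)


report = orchestrate(
    "parse logs and summarize errors", stub_plan, stub_worker, stub_synthesize
)
print(report)
```

Unlike plain parallelization, the number and content of the subtasks are not fixed in advance; they come from the planning step.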

Evaluator-optimizer implements an iterative refinement loop: a Generator LLM produces a candidate solution, an Evaluator LLM scores it, and if the result is rejected the feedback is sent back to the Generator for another attempt. The loop continues until the Evaluator accepts the output, enabling self-correction without human intervention.
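The refinement loop can be sketched roughly as follows. The `refine` function, its type aliases, and the stubs are hypothetical. The `max_rounds` cap is an assumption added here, not part of the pattern as described: it guards against an Evaluator that never accepts.

```python
from typing import Callable, Optional, Tuple

# Assumed shapes: the generator sees the task plus the latest feedback (if any);
# the evaluator returns an accept flag together with feedback text.
Generator = Callable[[str, Optional[str]], str]
Evaluator = Callable[[str], Tuple[bool, str]]


def refine(
    task: str,
    generate: Generator,
    evaluate: Evaluator,
    max_rounds: int = 5,  # assumption: safety cap on the number of attempts
) -> Tuple[str, bool]:
    """Generate, evaluate, and retry with feedback until accepted or capped."""
    feedback: Optional[str] = None
    candidate = ""
    for _ in range(max_rounds):
        candidate = generate(task, feedback)
        accepted, feedback = evaluate(candidate)
        if accepted:
            return candidate, True
    return candidate, False  # last attempt, flagged as not accepted


# Stub generator and evaluator (illustrative only).
def stub_generate(task: str, feedback: Optional[str]) -> str:
    # Applies the "fix" only once feedback has been received.
    return task + "." if feedback else task


def stub_evaluate(candidate: str) -> Tuple[bool, str]:
    if candidate.endswith("."):
        return True, ""
    return False, "end with a period"


final, ok = refine("Hello world", stub_generate, stub_evaluate)
print(final, ok)
```

Returning the accept flag alongside the candidate lets the caller decide what to do when the cap is hit, for example escalating to a human reviewer.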